Apache Logs BreakdownĮvery time you start a new project that involves Solr, you must first understand your data and organize it into fields. Next, you will learn all the details behind the above. After the indexed documents appear in Solr, Hue’s Search Application is utilized to search the indexes and build and display multiple unique dashboards for various audiences. MorphlineSink parses the messages, converts them into Solr documents, and sends them to Solr Server. Syslog Source sends them to Kafka Channel, which in turn passes them to a MorphlineSolr sink. They are then forwarded to a Flume Agent, via Flume Syslog Source. For our purposes, Apache web server log events originate in syslog.

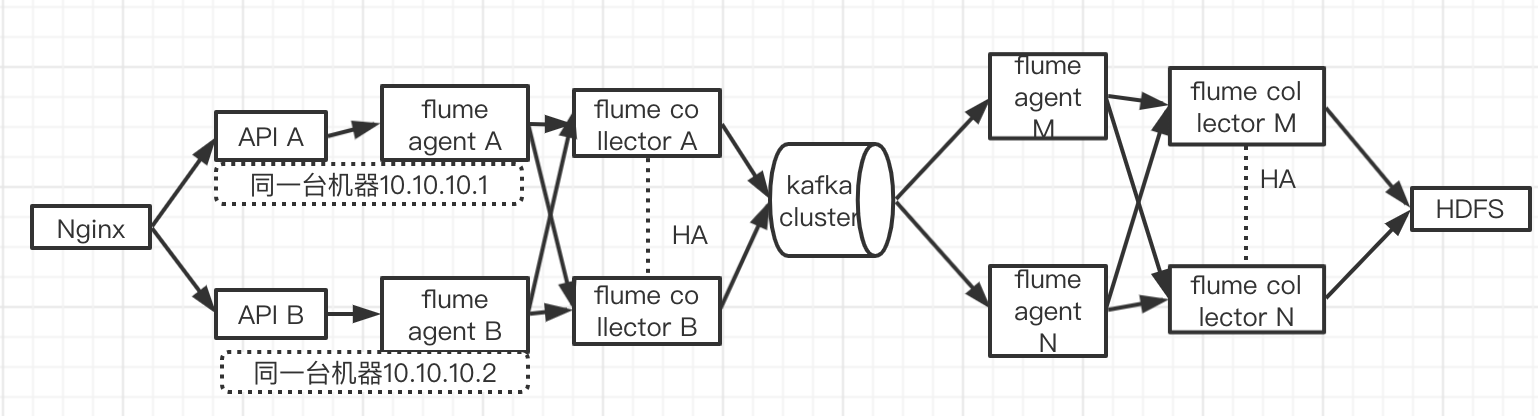

The high-level diagram below illustrates a simple setup that you can deploy in a matter of minutes. This implementation is based on open source components such as Apache Flume, Apache Kafka, Hue, and Apache Solr.įlume, Solr, Hue, and Kafka can all be easily installed using Cloudera Manager and parcels (the first three via the CDH parcel, and Kafka via its own parcel). In this post, you will explore a sample implementation of a system that can capture Apache HTTP Server logs in real time, index them for searching, and make them available to other analytic apps as part of a “pervasive analytics” approach. Recently, however, technology has matured quite a bit and, today, we have all the right ingredients we need in the Apache Hadoop ecosystem to capture the events in real time, process them, and make intelligent decisions based on that information. In the past, it is cost-prohibitive to capture all logs, let alone implement systems that act on them intelligently in real time. Whether your firm is an advertising agency that analyzes clickstream logs for customer insight, or you are responsible for protecting the firm’s information assets by preventing cyber-security threats, you should strive to get the most value from your data as soon as possible.

Web server logs, application logs, and system logs are all valuable sources of operational intelligence, uncovering potential revenue opportunities and helping drive down the bottom line. If you are not looking at your company’s operational logs, then you are at a competitive disadvantage in your industry. This simple use case illustrates how to make web log analysis, powered in part by Kafka, one of your first steps in a pervasive analytics journey. Cloudera recently announced formal support for Apache Kafka.
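To make the idea of organizing web log data into fields more concrete, here is a minimal Python sketch that splits one Apache access log line into named fields. It assumes the server writes the standard "combined" log format, which this post does not confirm, and the field names (`client_ip`, `status`, `user_agent`, and so on) are illustrative choices rather than the schema used in this setup. In the pipeline described here the parsing is done by the Morphline sink, not by Python; the sketch only shows what the resulting fields could look like.

```python
import re

# Pattern for the Apache "combined" log format.
# Assumption: the post does not state which LogFormat is in use.
# The group names below are hypothetical field names, not the post's Solr schema.
COMBINED = re.compile(
    r'(?P<client_ip>\S+) (?P<ident>\S+) (?P<user>\S+) '
    r'\[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<request>\S+) (?P<protocol>[^"]+)" '
    r'(?P<status>\d{3}) (?P<bytes>\d+|-) '
    r'"(?P<referer>[^"]*)" "(?P<user_agent>[^"]*)"'
)


def parse_line(line):
    """Return a dict of fields for one log line, or None if it does not match."""
    match = COMBINED.match(line)
    if match is None:
        return None
    fields = match.groupdict()
    # Numeric fields are converted so they can be sorted or aggregated later.
    fields["status"] = int(fields["status"])
    # Apache writes "-" when no response body was sent.
    fields["bytes"] = 0 if fields["bytes"] == "-" else int(fields["bytes"])
    return fields


# Sample line in combined format, used only for illustration.
SAMPLE = (
    '127.0.0.1 - frank [10/Oct/2000:13:55:36 -0700] '
    '"GET /apache_pb.gif HTTP/1.0" 200 2326 '
    '"http://www.example.com/start.html" '
    '"Mozilla/4.08 [en] (Win98; I ;Nav)"'
)

if __name__ == "__main__":
    for name, value in parse_line(SAMPLE).items():
        print(f"{name}: {value}")
```

Each named group is a candidate field for the search index. Deciding which of them to keep, and with what type, is the kind of up-front data analysis this section refers to.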
